
Transfer Learning for Computer Vision Tutorial

Created On: Mar 24, 2017 | Last Updated: Jan 27, 2025 | Last Verified: Nov 05, 2024

Author: Sasank Chilamkurthy

In this tutorial, you will learn how to train a convolutional neural network for image classification using transfer learning. You can read more about transfer learning in the cs231n notes.

Quoting these notes,

In practice, very few people train an entire Convolutional Network from scratch (with random initialization), because it is relatively rare to have a dataset of sufficient size. Instead, it is common to pretrain a ConvNet on a very large dataset (e.g. ImageNet, which contains 1.2 million images with 1000 categories), and then use the ConvNet either as an initialization or a fixed feature extractor for the task of interest.

These two major transfer learning scenarios look as follows:

Finetuning the ConvNet: Instead of random initialization, we initialize the network with a pretrained network, such as one trained on the ImageNet 1000-class dataset. The rest of the training proceeds as usual.

ConvNet as fixed feature extractor: Here, we freeze the weights of the entire network except for the final fully connected layer. This last fully connected layer is replaced with a new one with random weights, and only this layer is trained.

# License: BSD

# Author: Sasank Chilamkurthy

import torch

import torch.nn as nn

import torch.optim as optim

from torch.optim import lr_scheduler

import torch.backends.cudnn as cudnn

import numpy as np

import torchvision

from torchvision import datasets, models, transforms

import matplotlib.pyplot as plt

import time

import os

from PIL import Image

from tempfile import TemporaryDirectory

cudnn.benchmark = True

plt.ion()   # interactive mode

Load Data

We will use torchvision and torch.utils.data packages for loading the data.

The problem we’re going to solve today is to train a model to classify ants and bees. We have about 120 training images each for ants and bees, and 75 validation images for each class. A dataset this small is usually not enough to generalize well when training from scratch. Since we are using transfer learning, we should be able to generalize reasonably well.

This dataset is a very small subset of ImageNet.

Note

Download the data from here and extract it to the current directory.

# Data augmentation and normalization for training
# Just normalization for validation
data_transforms = {
    'train': transforms.Compose([
        transforms.RandomResizedCrop(224),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
    'val': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
}

data_dir = 'data/hymenoptera_data'
image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x),
                                          data_transforms[x])
                  for x in ['train', 'val']}
dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=4,
                                             shuffle=True, num_workers=4)
              for x in ['train', 'val']}
dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}
class_names = image_datasets['train'].classes

# We want to be able to train our model on an `accelerator <https://pytorch.org/docs/stable/torch.html#accelerators>`__
# such as CUDA, MPS, MTIA, or XPU. If the current accelerator is available, we will use it. Otherwise, we use the CPU.
device = torch.accelerator.current_accelerator().type if torch.accelerator.is_available() else "cpu"
print(f"Using {device} device")

Using cuda device
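The `torch.accelerator` namespace used above is only present in recent PyTorch releases. As a hedged sketch for environments where it may be missing, the hypothetical helper `pick_device` below (not part of the tutorial) falls back to the CUDA check and then to the CPU.

```python
import torch


def pick_device() -> str:
    """Return the name of the best available device (hypothetical helper)."""
    # torch.accelerator may not exist on older PyTorch installations
    accelerator = getattr(torch, "accelerator", None)
    if accelerator is not None and accelerator.is_available():
        return accelerator.current_accelerator().type
    if torch.cuda.is_available():
        return "cuda"
    return "cpu"


device = pick_device()
x = torch.zeros(2, 3).to(device)  # tensors are moved with .to(device), as in the training loop
print(f"Using {device} device; tensor lives on {x.device}")
```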

Visualize a few images

Let’s visualize a few training images so as to understand the data augmentations.

def imshow(inp, title=None):
    """Display image for Tensor."""
    inp = inp.numpy().transpose((1, 2, 0))
    mean = np.array([0.485, 0.456, 0.406])
    std = np.array([0.229, 0.224, 0.225])
    inp = std * inp + mean
    inp = np.clip(inp, 0, 1)
    plt.imshow(inp)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated


# Get a batch of training data
inputs, classes = next(iter(dataloaders['train']))

# Make a grid from batch
out = torchvision.utils.make_grid(inputs)

imshow(out, title=[class_names[x] for x in classes])

![['bees', 'bees', 'ants', 'ants']](../_images/sphx_glr_transfer_learning_tutorial_001.png)
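The `imshow` helper multiplies by `std` and adds `mean` because the data transforms normalized each channel as `(x - mean) / std`. The following NumPy-only sketch, using a made-up 2x2 image, checks that the two operations are inverses of each other. It works in height x width x channel layout, which is the layout `imshow` uses after its `transpose` call.

```python
import numpy as np

mean = np.array([0.485, 0.456, 0.406])
std = np.array([0.229, 0.224, 0.225])

# A made-up 2x2 RGB image in height x width x channel layout, values in [0, 1)
rng = np.random.default_rng(0)
image = rng.random((2, 2, 3))

normalized = (image - mean) / std   # per-channel normalization, as in the data transforms
restored = std * normalized + mean  # the inverse step that imshow applies before plotting

print(np.allclose(restored, image))
```

The `np.clip(inp, 0, 1)` call in `imshow` then only guards against small numerical overshoot outside the displayable range.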

Training the model

Now, let’s write a general function to train a model. Here, we will illustrate:

Scheduling the learning rate

Saving the best model

In the following, the parameter scheduler is an LR scheduler object from torch.optim.lr_scheduler.

def train_model(model, criterion, optimizer, scheduler, num_epochs=25):
    since = time.time()

    # Create a temporary directory to save training checkpoints
    with TemporaryDirectory() as tempdir:
        best_model_params_path = os.path.join(tempdir, 'best_model_params.pt')

        torch.save(model.state_dict(), best_model_params_path)
        best_acc = 0.0

        for epoch in range(num_epochs):
            print(f'Epoch {epoch}/{num_epochs - 1}')
            print('-' * 10)

            # Each epoch has a training and validation phase
            for phase in ['train', 'val']:
                if phase == 'train':
                    model.train()  # Set model to training mode
                else:
                    model.eval()   # Set model to evaluate mode

                running_loss = 0.0
                running_corrects = 0

                # Iterate over data.
                for inputs, labels in dataloaders[phase]:
                    inputs = inputs.to(device)
                    labels = labels.to(device)

                    # zero the parameter gradients
                    optimizer.zero_grad()

                    # forward
                    # track history if only in train
                    with torch.set_grad_enabled(phase == 'train'):
                        outputs = model(inputs)
                        _, preds = torch.max(outputs, 1)
                        loss = criterion(outputs, labels)

                        # backward + optimize only if in training phase
                        if phase == 'train':
                            loss.backward()
                            optimizer.step()

                    # statistics
                    running_loss += loss.item() * inputs.size(0)
                    running_corrects += torch.sum(preds == labels.data)
                if phase == 'train':
                    scheduler.step()

                epoch_loss = running_loss / dataset_sizes[phase]
                epoch_acc = running_corrects.double() / dataset_sizes[phase]

                print(f'{phase} Loss: {epoch_loss:.4f} Acc: {epoch_acc:.4f}')

                # deep copy the model
                if phase == 'val' and epoch_acc > best_acc:
                    best_acc = epoch_acc
                    torch.save(model.state_dict(), best_model_params_path)

            print()

        time_elapsed = time.time() - since
        print(f'Training complete in {time_elapsed // 60:.0f}m {time_elapsed % 60:.0f}s')
        print(f'Best val Acc: {best_acc:4f}')

        # load best model weights
        model.load_state_dict(torch.load(best_model_params_path, weights_only=True))
    return model
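The "saving the best model" part of `train_model` can be isolated into a small pattern: write the `state_dict` to disk whenever validation accuracy improves, then reload it at the end. The sketch below is a toy illustration under stated assumptions: the accuracies in `fake_val_acc` are invented, and the "training step" simply overwrites the weights with the epoch index so the restored checkpoint is easy to recognize.

```python
import os
from tempfile import TemporaryDirectory

import torch
import torch.nn as nn

model = nn.Linear(2, 1)
# Made-up validation accuracies, one per epoch (illustrative only)
fake_val_acc = [0.50, 0.80, 0.70]

with TemporaryDirectory() as tempdir:
    best_path = os.path.join(tempdir, "best_model_params.pt")
    torch.save(model.state_dict(), best_path)
    best_acc = 0.0

    for epoch, acc in enumerate(fake_val_acc):
        # Stand-in for a training step: overwrite the weights with the epoch index
        with torch.no_grad():
            model.weight.fill_(float(epoch))
        # Checkpoint only when validation accuracy improves
        if acc > best_acc:
            best_acc = acc
            torch.save(model.state_dict(), best_path)

    # Restore the weights from the best epoch (epoch 1 in this toy run)
    model.load_state_dict(torch.load(best_path, weights_only=True))

print(best_acc, model.weight)
```

Even though the last epoch left the weights at 2.0, the reloaded model carries the weights from epoch 1, which had the highest accuracy.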

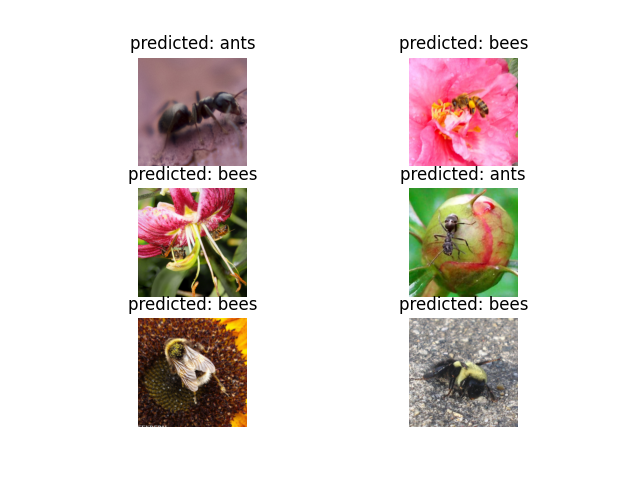

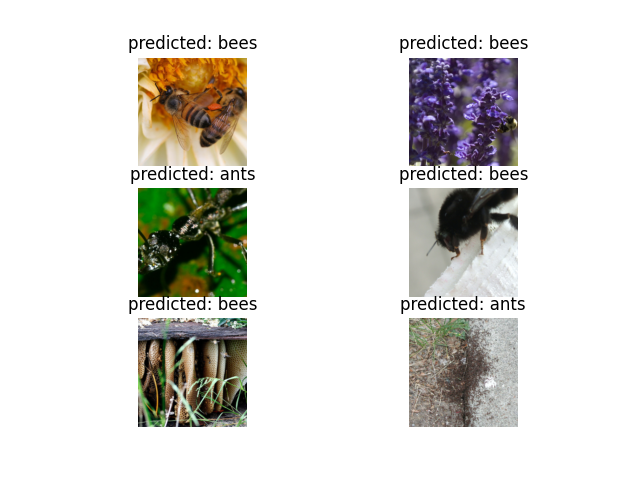

Visualizing the model predictions

A generic function to display predictions for a few images.

def visualize_model(model, num_images=6):
    was_training = model.training
    model.eval()
    images_so_far = 0
    fig = plt.figure()

    with torch.no_grad():
        for i, (inputs, labels) in enumerate(dataloaders['val']):
            inputs = inputs.to(device)
            labels = labels.to(device)

            outputs = model(inputs)
            _, preds = torch.max(outputs, 1)

            for j in range(inputs.size()[0]):
                images_so_far += 1
                ax = plt.subplot(num_images//2, 2, images_so_far)
                ax.axis('off')
                ax.set_title(f'predicted: {class_names[preds[j]]}')
                imshow(inputs.cpu().data[j])

                if images_so_far == num_images:
                    model.train(mode=was_training)
                    return
        model.train(mode=was_training)
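Both the training loop and `visualize_model` turn raw model outputs into predicted labels with `torch.max(outputs, 1)`. As a small illustration with invented logits for a batch of three images, the second return value holds the index of the largest score in each row, which is then used to look up the class name.

```python
import torch

class_names = ["ants", "bees"]  # same order as the tutorial's dataset classes

# Made-up logits for a batch of 3 images, one column per class
outputs = torch.tensor([[2.0, -1.0],
                        [0.1, 0.3],
                        [-0.5, 1.5]])

# torch.max over dim 1 returns (max values, indices of the max) per row
_, preds = torch.max(outputs, 1)
predicted_names = [class_names[p] for p in preds.tolist()]
print(preds.tolist(), predicted_names)
```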

Finetuning the ConvNet

Load a pretrained model and reset final fully connected layer.

model_ft = models.resnet18(weights='IMAGENET1K_V1')

num_ftrs = model_ft.fc.in_features

# Here the size of each output sample is set to 2.

# Alternatively, it can be generalized to ``nn.Linear(num_ftrs, len(class_names))``.

model_ft.fc = nn.Linear(num_ftrs, 2)

model_ft = model_ft.to(device)

criterion = nn.CrossEntropyLoss()

# Observe that all parameters are being optimized

optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs

exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)

Downloading: "https://download.pytorch.org/models/resnet18-f37072fd.pth" to /var/lib/ci-user/.cache/torch/hub/checkpoints/resnet18-f37072fd.pth


100%|██████████| 44.7M/44.7M [00:00<00:00, 437MB/s]
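To see what "decay LR by a factor of 0.1 every 7 epochs" means over a 25-epoch run, the sketch below attaches the same `StepLR` settings to a dummy optimizer with a single throwaway parameter (an assumption made purely for illustration) and records the learning rate in effect at each epoch.

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torch.optim import lr_scheduler

# A single dummy parameter stands in for the model's weights
param = nn.Parameter(torch.zeros(1))
optimizer = optim.SGD([param], lr=0.001, momentum=0.9)
scheduler = lr_scheduler.StepLR(optimizer, step_size=7, gamma=0.1)

lrs = []
for epoch in range(25):
    lrs.append(optimizer.param_groups[0]["lr"])  # LR used during this epoch
    optimizer.step()    # stand-in for the training batches of the epoch
    scheduler.step()    # called once per epoch, as in train_model

for epoch in (0, 6, 7, 14, 21):
    print(f"epoch {epoch:2d}: lr = {lrs[epoch]:.0e}")
```

Epochs 0 to 6 run at the initial rate, and the rate drops by a factor of ten at epochs 7, 14, and 21.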

Train and evaluate

It should take around 15-25 min on CPU. On GPU though, it takes less than a minute.

model_ft = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler,

num_epochs=25)

Epoch 0/24

----------

train Loss: 0.5903 Acc: 0.7008

val Loss: 0.2170 Acc: 0.9216

Epoch 1/24

----------

train Loss: 0.4710 Acc: 0.8156

val Loss: 0.2947 Acc: 0.9020

Epoch 2/24

----------

train Loss: 0.4788 Acc: 0.8033

val Loss: 0.2117 Acc: 0.9412

Epoch 3/24

----------

train Loss: 0.4692 Acc: 0.8238

val Loss: 0.1701 Acc: 0.9346

Epoch 4/24

----------

train Loss: 0.4088 Acc: 0.8361

val Loss: 0.3131 Acc: 0.8693

Epoch 5/24

----------

train Loss: 0.4055 Acc: 0.8320

val Loss: 0.2013 Acc: 0.9281

Epoch 6/24

----------

train Loss: 0.3931 Acc: 0.8648

val Loss: 0.4235 Acc: 0.8366

Epoch 7/24

----------

train Loss: 0.3563 Acc: 0.8156

val Loss: 0.3095 Acc: 0.9020

Epoch 8/24

----------

train Loss: 0.2656 Acc: 0.9098

val Loss: 0.3170 Acc: 0.9020

Epoch 9/24

----------

train Loss: 0.3295 Acc: 0.8566

val Loss: 0.2846 Acc: 0.8954

Epoch 10/24

----------

train Loss: 0.2747 Acc: 0.8770

val Loss: 0.2425 Acc: 0.9216

Epoch 11/24

----------

train Loss: 0.2622 Acc: 0.8607

val Loss: 0.2274 Acc: 0.9085

Epoch 12/24

----------

train Loss: 0.2942 Acc: 0.8689

val Loss: 0.2726 Acc: 0.8889

Epoch 13/24

----------

train Loss: 0.2743 Acc: 0.8770

val Loss: 0.1998 Acc: 0.9085

Epoch 14/24

----------

train Loss: 0.2348 Acc: 0.8852

val Loss: 0.1951 Acc: 0.9020

Epoch 15/24

----------

train Loss: 0.2979 Acc: 0.8689

val Loss: 0.2160 Acc: 0.8889

Epoch 16/24

----------

train Loss: 0.2774 Acc: 0.8730

val Loss: 0.2210 Acc: 0.9020

Epoch 17/24

----------

train Loss: 0.2253 Acc: 0.9180

val Loss: 0.2172 Acc: 0.9020

Epoch 18/24

----------

train Loss: 0.2654 Acc: 0.8648

val Loss: 0.2134 Acc: 0.9216

Epoch 19/24

----------

train Loss: 0.3400 Acc: 0.8607

val Loss: 0.2012 Acc: 0.9150

Epoch 20/24

----------

train Loss: 0.2938 Acc: 0.8811

val Loss: 0.2054 Acc: 0.9216

Epoch 21/24

----------

train Loss: 0.2120 Acc: 0.9016

val Loss: 0.2008 Acc: 0.9281

Epoch 22/24

----------

train Loss: 0.2631 Acc: 0.8975

val Loss: 0.2023 Acc: 0.9085

Epoch 23/24

----------

train Loss: 0.3325 Acc: 0.8197

val Loss: 0.1949 Acc: 0.9281

Epoch 24/24

----------

train Loss: 0.2967 Acc: 0.8770

val Loss: 0.2071 Acc: 0.8954

Training complete in 0m 36s

Best val Acc: 0.941176
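The loss and accuracy values in the log above are averages over the whole dataset, not averages of per-batch values. `train_model` multiplies each batch's mean loss by the batch size before summing, so a smaller final batch is weighted correctly. The plain-Python sketch below reproduces that arithmetic with invented numbers for a 10-image dataset split into batches of 4, 4, and 2.

```python
# Made-up per-batch mean losses and correct counts (illustrative only)
batches = [
    {"size": 4, "mean_loss": 0.50, "correct": 3},
    {"size": 4, "mean_loss": 0.30, "correct": 4},
    {"size": 2, "mean_loss": 0.90, "correct": 1},
]
dataset_size = sum(b["size"] for b in batches)

running_loss = 0.0
running_corrects = 0
for b in batches:
    # The criterion returns the batch mean, so scale it back up by the batch size
    running_loss += b["mean_loss"] * b["size"]
    running_corrects += b["correct"]

epoch_loss = running_loss / dataset_size
epoch_acc = running_corrects / dataset_size

# Averaging the batch means directly would over-weight the small last batch
naive_mean = sum(b["mean_loss"] for b in batches) / len(batches)

print(f"epoch loss {epoch_loss:.4f}, acc {epoch_acc:.4f}, naive batch mean {naive_mean:.4f}")
```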

visualize_model(model_ft)

ConvNet as fixed feature extractor

Here, we need to freeze all of the network except the final layer. We need to set requires_grad = False to freeze the parameters so that the gradients are not computed in backward().

You can read more about this in the documentation here.

model_conv = torchvision.models.resnet18(weights='IMAGENET1K_V1')

for param in model_conv.parameters():
    param.requires_grad = False

# Parameters of newly constructed modules have requires_grad=True by default

num_ftrs = model_conv.fc.in_features

model_conv.fc = nn.Linear(num_ftrs, 2)

model_conv = model_conv.to(device)

criterion = nn.CrossEntropyLoss()

# Observe that only parameters of final layer are being optimized as

# opposed to before.

optimizer_conv = optim.SGD(model_conv.fc.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs

exp_lr_scheduler = lr_scheduler.StepLR(optimizer_conv, step_size=7, gamma=0.1)
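One way to confirm that freezing worked is to count the parameters that still require gradients and to check which ones receive a gradient after a backward pass. The sketch below does this on a small stand-in network (two linear layers, not ResNet-18) so that it runs without downloading weights. The layer sizes are arbitrary assumptions.

```python
import torch
import torch.nn as nn

# Small stand-in for a pretrained backbone plus classifier head (not ResNet-18)
model = nn.Sequential(
    nn.Linear(8, 4),   # plays the role of the pretrained layers
    nn.ReLU(),
    nn.Linear(4, 3),   # plays the role of the original final layer
)

# Freeze everything, as in the tutorial
for param in model.parameters():
    param.requires_grad = False

# Replacing the last layer creates fresh parameters with requires_grad=True
model[2] = nn.Linear(4, 2)

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
total = sum(p.numel() for p in model.parameters())
print(f"trainable parameters: {trainable} of {total}")

# After a backward pass, only the new layer has gradients
loss = model(torch.randn(5, 8)).sum()
loss.backward()
print(model[0].weight.grad is None, model[2].weight.grad is not None)
```

The same two checks can be run on `model_conv`, where only `model_conv.fc` should report trainable parameters.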

Train and evaluate

On CPU, this will take about half the time of the previous scenario. This is expected, as gradients don’t need to be computed for most of the network. However, the forward pass does need to be computed.

model_conv = train_model(model_conv, criterion, optimizer_conv,

exp_lr_scheduler, num_epochs=25)

Epoch 0/24

----------

train Loss: 0.6331 Acc: 0.6434

val Loss: 0.2510 Acc: 0.9216

Epoch 1/24

----------

train Loss: 0.6771 Acc: 0.6967

val Loss: 0.2352 Acc: 0.9020

Epoch 2/24

----------

train Loss: 0.4537 Acc: 0.8197

val Loss: 0.2055 Acc: 0.9412

Epoch 3/24

----------

train Loss: 0.4050 Acc: 0.8033

val Loss: 0.1749 Acc: 0.9281

Epoch 4/24

----------

train Loss: 0.6083 Acc: 0.7746

val Loss: 0.1692 Acc: 0.9412

Epoch 5/24

----------

train Loss: 0.4104 Acc: 0.8361

val Loss: 0.2291 Acc: 0.9281

Epoch 6/24

----------

train Loss: 0.3597 Acc: 0.8320

val Loss: 0.1661 Acc: 0.9477

Epoch 7/24

----------

train Loss: 0.3475 Acc: 0.8648

val Loss: 0.1690 Acc: 0.9477

Epoch 8/24

----------

train Loss: 0.3004 Acc: 0.8893

val Loss: 0.2063 Acc: 0.9477

Epoch 9/24

----------

train Loss: 0.3520 Acc: 0.8648

val Loss: 0.1741 Acc: 0.9412

Epoch 10/24

----------

train Loss: 0.3576 Acc: 0.8402

val Loss: 0.1614 Acc: 0.9542

Epoch 11/24

----------

train Loss: 0.3188 Acc: 0.8525

val Loss: 0.1865 Acc: 0.9542

Epoch 12/24

----------

train Loss: 0.3279 Acc: 0.8648

val Loss: 0.1853 Acc: 0.9542

Epoch 13/24

----------

train Loss: 0.2537 Acc: 0.8893

val Loss: 0.2184 Acc: 0.9216

Epoch 14/24

----------

train Loss: 0.3280 Acc: 0.8607

val Loss: 0.1771 Acc: 0.9608

Epoch 15/24

----------

train Loss: 0.3321 Acc: 0.8648

val Loss: 0.1889 Acc: 0.9412

Epoch 16/24

----------

train Loss: 0.2804 Acc: 0.8730

val Loss: 0.1907 Acc: 0.9477

Epoch 17/24

----------

train Loss: 0.3353 Acc: 0.8443

val Loss: 0.2022 Acc: 0.9412

Epoch 18/24

----------

train Loss: 0.2714 Acc: 0.8852

val Loss: 0.1664 Acc: 0.9477

Epoch 19/24

----------

train Loss: 0.3275 Acc: 0.8156

val Loss: 0.1865 Acc: 0.9542

Epoch 20/24

----------

train Loss: 0.3696 Acc: 0.8443

val Loss: 0.1755 Acc: 0.9608

Epoch 21/24

----------

train Loss: 0.2963 Acc: 0.8607

val Loss: 0.1840 Acc: 0.9477

Epoch 22/24

----------

train Loss: 0.3033 Acc: 0.8730

val Loss: 0.1836 Acc: 0.9477

Epoch 23/24

----------

train Loss: 0.3573 Acc: 0.8361

val Loss: 0.1755 Acc: 0.9608

Epoch 24/24

----------

train Loss: 0.3503 Acc: 0.8648

val Loss: 0.1618 Acc: 0.9477

Training complete in 0m 28s

Best val Acc: 0.960784

visualize_model(model_conv)

plt.ioff()

plt.show()

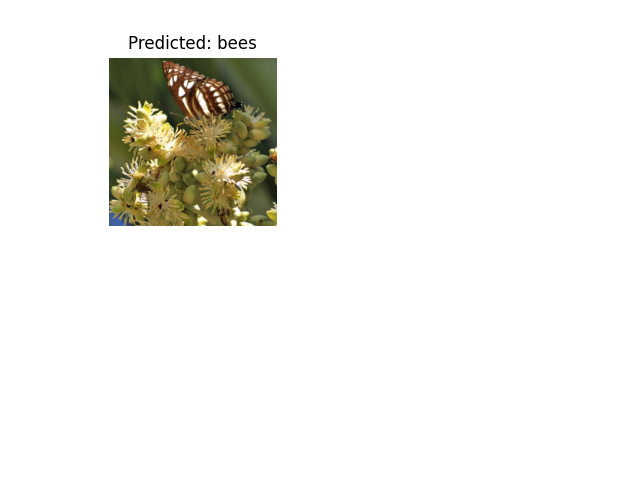

Inference on custom images

Use the trained model to make predictions on custom images and visualize the predicted class labels along with the images.

def visualize_model_predictions(model, img_path):
    was_training = model.training
    model.eval()

    img = Image.open(img_path)
    img = data_transforms['val'](img)
    img = img.unsqueeze(0)
    img = img.to(device)

    with torch.no_grad():
        outputs = model(img)
        _, preds = torch.max(outputs, 1)

        ax = plt.subplot(2,2,1)
        ax.axis('off')
        ax.set_title(f'Predicted: {class_names[preds[0]]}')
        imshow(img.cpu().data[0])

        model.train(mode=was_training)

visualize_model_predictions(

model_conv,

img_path='data/hymenoptera_data/val/bees/72100438_73de9f17af.jpg'

)

plt.ioff()

plt.show()
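The function above reports only the predicted label. If a confidence score is also wanted, one possible extension (not part of the original tutorial) is to apply a softmax to the logits. The sketch below uses an untrained stand-in classifier and a random 3x8x8 tensor in place of a real image, so the printed label is meaningless. It only illustrates the `unsqueeze(0)` batching step and the softmax call.

```python
import torch
import torch.nn as nn

class_names = ["ants", "bees"]

# Untrained stand-in classifier for 3x8x8 inputs (a placeholder, not ResNet-18)
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 2))
model.eval()

img = torch.rand(3, 8, 8)   # a single image tensor: channels x height x width
batch = img.unsqueeze(0)    # add the batch dimension -> 1 x 3 x 8 x 8

with torch.no_grad():
    logits = model(batch)
    probs = torch.softmax(logits, dim=1)    # convert logits to class probabilities
    confidence, pred = torch.max(probs, 1)  # top probability and its class index

print(f"Predicted: {class_names[pred.item()]} ({confidence.item():.2f})")
```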

Further Learning

If you would like to learn more about the applications of transfer learning, check out our Quantized Transfer Learning for Computer Vision Tutorial.

Total running time of the script: (1 minutes 6.492 seconds)